When I started studying the peculiar world of videogame development, I soon met the confusingly named Unity3D as the reference tool used by many practitioners of the black art of game creation. I (wrongly) classified it as a tool for people making realistic 3D games (a style many in indie games today don’t particularly love – “We’re all effin’ tired of 3D”), telling myself “it must be a kind of Maya for games”. So I ignored it, as at the time the core of my interest was the game design dimension and not the development details, which are left to poorly paid slaves when needed (by which I mean myself, of course).

It took me a long time to understand enough about videogames to “get” Unity and begin using it. And answering the question “Why is Unity so popular in videogame development?” took me learning beyond the basics of Unity development.

Once you know why it works, you see that it is such a brilliant solution that you will hardly stop to explain to anyone why it works: you will be busy dedicating your time to creating games! But in my overwhelming generosity (you may call me from now on “Your Majesty”), I will stop for a little while and give you the key to this realm, which is full of treasures once you are inside.

What is Unity?

Wikipedia defines it so:

Unity is a cross-platform game engine with a built-in IDE developed by Unity Technologies. It is used to develop video games for web plugins, desktop platforms, consoles and mobile devices.

IDE stands for Integrated Development Environment: Unity actually is the union of

(1) a game engine, which allows the games you create to run (hence to be played) in different environments,

(2) an application where the “visible pieces” of a game can be put together (the IDE) with a graphical preview and using a controlled “play it” function, and

(3) a code editor (the one provided is MonoDevelop, but developers can use others; yes I know that code writing is usually classified as part of the IDE, but for the sake of clarity of what follows, let’s keep it as a separate piece).

So once you have the game design and all the required skills, you can put together graphics, sounds and animations in Unity’s IDE, write the code associated with the assets in the editor, and then generate a playable application. One of Unity’s features is that it is multi-platform: more or less the same game can run similarly on, say, an iPad or on Windows.

The process I just outlined is intrinsically quite complicated – videogames are complex structures. Unity provides a complete workflow and a lot of help along the way, and a large set of features comes with Unity’s free version (see below). But IDEs for games have been around for quite a while – why is Unity special? We’ll see that in what follows.

Notes.

Is it Unity3d or Unity? The “3d” extension is common when referring to Unity and is actually part of the Internet domain where you find the application (https://unity3d.com/), but the software is more properly called Unity, as it is no longer tightly bound to 3D games, and that is what its producers now call it.

By game asset or asset in the following I will mean an image, model, animation or sound used in creating games (so slightly more restrictive than what is meant in the Unity documentation).

In order to make some technical points without excluding non-developers, technical parts are written in code style and can be skipped.

A pragmatic tool

First of all, Unity is mostly free as a development tool, and completely royalty free. But it’s clearly making loads of money for its producers – it’s a working ecosystem, with over a million developers registered to Unity.

Mostly Unity is popular because… it works. Not in the limited sense that it outputs working games (sometimes it does), but in the sense that it works with and for people making games, including those with limited budgets (“indies”); this is achieved by a set of clever ideas that strike a balance between doing too little and doing too much. Let’s see some of those ideas.

The public variables “hack”

I’m putting this feature on top because it is so simple and powerful, and presents a core relationship.

When you work in Unity (actually in any game development environment) you need to switch between two mindsets: one for when you are in the IDE, where you have assets, can make the game run and have graphical previews, and another for when you are writing code, which is a purely linguistic / logical / sequential universe.

In games you make your game characters and objects behave and reason in some form by writing code (and animations, but those, being state machines, can be considered code too). So the main problem is how to direct the assets from code in a way that is practical, flexible, and as safe as possible.

By “safe” I mean that the environment should warn the developer as early as possible that a link from code to assets is or could be broken.

To direct the assets, e.g. move them, make them collide, transform and sing, you first of all need to have a way to “get” them in code. This is simply done in Unity by

(1) dragging your code script onto the main object on which it acts, e.g. dragging the singing instructions onto the singer character: this way, on the character in the IDE, you’ll see the plugs which you exposed in the code, and

(2) in turn setting values for those plugs by dragging a game object onto them.

So for example, if you drag the singing script onto the singer character and you exposed the variable “instrument” in the singing script, you can tell Unity which instrument the singer is playing by dragging the instrument onto the script part of the singer character.

So in code you can create logic around objects that will simply be set by dragging objects in the IDE. This is the trick, which allows quick tests, prototyping, and balancing while you are building the game. This is also a way to learn how Unity works, and is the simplest way to connect assets, graphics and play with code writing!
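For those who like to see it in code: here is a minimal sketch of the idea in plain Python. It is not Unity code and not Unity's API; the names `SingingScript`, `exposed_slots` and `drag_onto` are made up for illustration. The assumption is simply that "public" attributes become slots in the editor, and that an empty slot is reported as soon as the code needs it.

```python
class GameObject:
    """Stand-in for a scene object; it only carries a name here."""
    def __init__(self, name):
        self.name = name


class SingingScript:
    """A script whose public attributes act as the 'plugs' shown in the editor."""
    def __init__(self):
        self.instrument = None  # public: exposed as a slot
        self._verse = 0         # leading underscore: treated as private, not exposed

    def sing(self):
        if self.instrument is None:
            # The "safe" part: a missing link is reported as soon as it is needed.
            raise ValueError("instrument slot is empty")
        self._verse += 1
        return f"verse {self._verse}, accompanied by {self.instrument.name}"


def exposed_slots(script):
    """List the attribute names a (hypothetical) editor would show as slots."""
    return sorted(name for name in vars(script) if not name.startswith("_"))


def drag_onto(script, slot, game_object):
    """Mimic dragging an object onto a slot in the editor."""
    if slot not in exposed_slots(script):
        raise AttributeError(f"{slot!r} is not an exposed slot")
    setattr(script, slot, game_object)


singer_script = SingingScript()
drag_onto(singer_script, "instrument", GameObject("Lute"))
print(exposed_slots(singer_script))  # ['instrument']
print(singer_script.sing())          # verse 1, accompanied by Lute
```

The script never looks the instrument up by name or path: it only declares the slot, and whoever assembles the scene decides what goes in it.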

It is so easy that it may have unintended consequences, but it is a great help to start.

Symmetrically to this idea, any component added to a game object through the IDE is then recoverable via code and available for logical conditioning; for example, if you add audio to a game object, this audio element is immediately available in code.

Unity tries different tricks to help in this process of binding assets and code: for example, it takes the names you give in code to assets (public variables on classes) and tries to render them in the IDE in human-readable form; I was so surprised to see the enemyShip variable presented in the IDE as “Enemy Ship”, as in the picture above.
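The renaming trick is easy to imitate. The following plain Python sketch shows one possible rule (split before capitals, capitalise the first letter); `nicify` is a made-up name, and Unity's actual rules may well differ.

```python
import re


def nicify(variable_name):
    """Turn a camelCase identifier into a spaced, capitalised label."""
    # Insert a space before each capital that follows a lowercase letter or digit.
    spaced = re.sub(r"(?<=[a-z0-9])(?=[A-Z])", " ", variable_name)
    # Capitalise the first character and leave the rest as written.
    return spaced[:1].upper() + spaced[1:]


print(nicify("enemyShip"))  # Enemy Ship
```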

The more you learn about Unity and refine your projects and code writing, the less important and used the feature described here will be, because you will need to completely direct instantiation and flow through code. But for rapid prototyping and debugging this feature is simply great; and how you lead beginners into a process as complex as game development is not a secondary feature.

Note. The well-read Daniele Giardini points out that you can actually expose private variables to the IDE with SerializeField – but that is way beyond the scope of this post.

Components vs. single-inheritance

A great idea embedded in Unity is the fact that (in non-technical terms) extension is mostly by association and not by hierarchical inheritance. This principle allows for great flexibility (and possibly also chaos in the medium run, of course). So for example if you drag a dragon image into your first 2D cave (say it’s the first level of your game), this results in a sprite; to this sprite you can add animation, physics and behaviour (the latter through scripts), and even change its name and position in the hierarchy, but the object remains the same. Adding yet another component to it, say audio, will not break any of the code manipulating your object.

This can be summarized as: Unity elements, “game objects”, are component based.
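A small model of "extension by association", again in plain Python and not Unity's API: all class and method names below are illustrative assumptions. A game object is just a name plus a bag of components, and code written against one component is untouched when another is added later.

```python
class Component:
    """Base class for anything that can be attached to a game object."""


class Sprite(Component):
    def __init__(self, image):
        self.image = image


class AudioSource(Component):
    def __init__(self, clip):
        self.clip = clip


class GameObject:
    """An object is just a name plus a bag of components, keyed by type."""
    def __init__(self, name):
        self.name = name
        self._components = {}

    def add_component(self, component):
        self._components[type(component)] = component
        return component

    def get_component(self, component_type):
        # Returns None when the component is absent, so callers can branch on it.
        return self._components.get(component_type)


def describe(game_object):
    """Code written against Sprite only; other components do not affect it."""
    sprite = game_object.get_component(Sprite)
    return f"{game_object.name} drawn from {sprite.image}"


dragon = GameObject("Dragon")
dragon.add_component(Sprite("dragon.png"))
before = describe(dragon)

dragon.add_component(AudioSource("roar.wav"))  # audio is added later
after = describe(dragon)

print(before == after)                         # True
print(dragon.get_component(AudioSource).clip)  # roar.wav
```

With inheritance, adding audio would have meant a new `DragonWithAudio` class or a change to a shared base class; here the dragon stays the same object and simply carries one more part.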

Once you get this point, you quickly see how working with Unity makes it much easier for people with different skills to work together, and at different times, without interfering with each other’s work.

A workflow based on separation of concerns

One way of looking at Unity is as a central workflow station for game production: developers, game designers, graphic designers, modelers and audio guys all get to send their stuff to Unity in a quite comfortable way (maybe that is why it is called “unity”?).

Unity actually presents its workflow quite well here https://unity3d.com/unity/workflow

“Unity can import 3D models, bones, and animations from almost any 3D application (see the bottom of this page for an extensive list of supported 3D programs). Hit Save in Maya, 3ds Max, Modo, Cinema 4D, Cheetah3D or Blender, and Unity instantly re-imports the updated asset and applies changes across the entire project.”

And with Unity 4.3 (the latest release at the time of writing) the 2D workflow got simplified and empowered. Similar flexibility is true for audio too. As the Unity fellows present as an example, “Save your multi-layer Photoshop files, and Unity automatically compresses your images with high quality compression.” Instead of trying to replace specialized applications, the idea here is integration.

Unity’s slogan could be: anything I can do to facilitate trans-media talk I will do.

Live edit at runtime

At every moment of gameplay, your game is in a state: the assets are in a position in space, the points have a value, the flying pots have a momentum. Now it is very hard to imagine how a complex system will behave without trying it (even Da Vinci got it wrong at times), and a great way to make it work is to fix things while testing them (for those in web development: how nice it is that today you can test CSS and HTML by modifying the source “live” in the browser, no refresh needed?).

Now Unity allows you to inspect and change state when you run your game in the IDE. It even gives you a structural view of the changes to the game assets while playing! This is another very practical aspect of developing in Unity.

Unity makes debugging from code breakpoints much less necessary by allowing in-play variable checking and editing. You can balance the game by playing in the runtime version and trying new values live. It also lets you debug variables classically by launching the entire game from a debugger and setting breakpoints, but that is not the main way to proceed.

There are more senses in which Unity supports fixing things while the game is being developed (just to bluff some methodological knowledge – this is compatible with “agile development”): it lets you reorganize the assets in folders seamlessly, just by dragging things around, without breaking any of the cross references in your project. You see, when developing games you are bound to end up with a lot of stuff around (graphically: scenery, characters, objects, and then animations, sounds, models, scripts, levels…) and reorganizing stuff safely on the fly is practical – again.

Unity manages the notion of prefab, a reusable template that you can use to create instances that initially inherit all the components of the prefab; each instance can then have its state evolve independently. You can create prefabs at any time from any object, so you can change your mind at any time and also try things before making them templates.

By adding temporary instances of prefabs in the IDE you get a visual and runtime preview of the game objects, giving you a visual cue of the process before it becomes entirely programmatic.
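The prefab idea (a template whose instances start identical and then drift apart) can be sketched in a few lines of plain Python. This is only a model under my own assumptions: the `Prefab` class and its dictionary layout are invented for the example and say nothing about how Unity stores prefabs.

```python
import copy


class Prefab:
    """A reusable template: a name plus a plain dict of component settings."""
    def __init__(self, name, components):
        self.name = name
        self.components = components

    def instantiate(self):
        # Deep copy, so nested settings are not shared between instances.
        return {"name": self.name, "components": copy.deepcopy(self.components)}


pot_prefab = Prefab("FlyingPot", {"body": {"mass": 2.0, "velocity": [0.0, 0.0]}})

pot_a = pot_prefab.instantiate()
pot_b = pot_prefab.instantiate()

pot_a["components"]["body"]["velocity"][0] = 5.0  # only pot_a starts moving

print(pot_a["components"]["body"]["velocity"])  # [5.0, 0.0]
print(pot_b["components"]["body"]["velocity"])  # [0.0, 0.0]
```

The deep copy is the whole point: with a shallow copy both pots would share the same velocity list, and moving one would move the other.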

Handling two timelines

Time in games is a relevant dimension. Since Unity is a cross-platform engine, and given that any platform comes in different versions and with different computing power, you have the problem that the rate of redrawing the screen (frame rendering) and of computing physical events and transformations (physics steps) may vary, and hence the same game may be, say, easy or difficult depending on where it is published. If you have asynchronous events (as you are bound to have in games) they may relate differently depending on the platform, resulting in incoherent gameplay. And finally, depending on the platform, you may have a varying correlation between making a physics step and re-rendering on screen.

Unity gives you built-in tools for systematically dealing with these problems, by letting you choose whether to react at fixed time intervals or at the frame rate, which varies with the platform. It also allows you to tweak the rendering grain according to the available resources – all this for free.

In this picture, F are screen redraws and U are physics steps, shown as differently correlated in different environments. See the full explanation here: https://answers.unity3d.com/questions/10993/whats-the-difference-between-update-and-fixedupdat.html
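To make the two timelines concrete, here is a plain Python sketch of a common technique, the fixed-timestep accumulator loop. I am not claiming this is how Unity implements it internally; it only illustrates why reacting at fixed intervals gives the same amount of physics on a fast and on a slow machine. Times are in whole milliseconds to keep the arithmetic exact, and the function name `run` and the 20 ms step are assumptions made for the example.

```python
def run(frame_durations_ms, fixed_step_ms=20):
    """Return (frames_rendered, physics_steps) for a list of frame durations."""
    accumulator = 0
    physics_steps = 0
    frames = 0
    for frame_time in frame_durations_ms:
        accumulator += frame_time
        # Catch up on physics in fixed-size steps, however long the frame took.
        while accumulator >= fixed_step_ms:
            physics_steps += 1
            accumulator -= fixed_step_ms
        frames += 1  # one render per frame
    return frames, physics_steps


fast_machine = [10] * 100  # 100 frames of 10 ms: one second at 100 fps
slow_machine = [40] * 25   # 25 frames of 40 ms: one second at 25 fps

print(run(fast_machine))  # (100, 50)
print(run(slow_machine))  # (25, 50)
```

Both machines render a different number of frames in the same second, but both perform 50 physics steps: on the fast one a step happens every other frame, on the slow one two steps happen per frame.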

Colliders, rigid bodies & kinematics

I won’t go into any technical detail: let’s just say that physics in games is a world of creativity and a creative relation with Newtonian mechanics. So game designers require the engine both to be physics aware and to be capable of going beyond those constraints. Moreover, players today are familiar with a logical language of physics for 2D (again, partially unconstrained by Newtonian laws) that must be respected in game design for 2D.

By separating the notions of physical body and collider (a shape around your object that is sensitive to collisions), Unity allows collision detection without necessarily reacting on the basis of mass and gravity. Moreover, it natively supports two kinds of physics, one for 3D and one for 2D.

And there is much more, such as the distinction between static and kinematic colliders, and their shapes. Who could ask for anything more?
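The separation between body and collider can be modelled as follows. This is a toy in plain Python with invented names (`BoxCollider`, `Body`, `Thing`, `step`) and a made-up gravity constant, not Unity's physics API: a thing with only a collider takes part in overlap detection but is never moved by gravity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class BoxCollider:
    """Axis-aligned box used only to detect overlap."""
    x: float
    y: float
    width: float
    height: float

    def overlaps(self, other):
        return (self.x < other.x + other.width and other.x < self.x + self.width
                and self.y < other.y + other.height and other.y < self.y + self.height)


@dataclass
class Body:
    """Optional physical body: only things that have one react to gravity."""
    mass: float
    velocity_y: float = 0.0


@dataclass
class Thing:
    name: str
    collider: BoxCollider
    body: Optional[Body] = None


GRAVITY = -10.0  # illustrative constant


def step(things, dt):
    """Apply gravity to things with a body, then report overlapping pairs."""
    for thing in things:
        if thing.body is not None:
            thing.body.velocity_y += GRAVITY * dt
            thing.collider.y += thing.body.velocity_y * dt
    hits = []
    for i, a in enumerate(things):
        for b in things[i + 1:]:
            if a.collider.overlaps(b.collider):
                hits.append((a.name, b.name))
    return hits


crate = Thing("crate", BoxCollider(0, 5, 1, 1), Body(mass=1.0))
zone = Thing("zone", BoxCollider(0, 0, 1, 1))  # collider only, no body

hits = []
for _ in range(10):
    hits = step([crate, zone], dt=0.1)

print(hits)             # [('crate', 'zone')]
print(zone.collider.y)  # 0
```

The crate falls and ends up overlapping the zone; the zone notices the contact but stays exactly where it was, because nothing gave it mass or gravity.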

Unity 2D

Unity is being used for all kinds of games, 3D, 2D, mixed approaches. I fear that those that see Unity’s approach to 2D as “too complex” simply haven’t seen through the complexity of any kind of game; a 2D game is conceptually not much simpler than a 3D one.

Now that Unity supports specific 2D projects, it also offers easy first access to 2D. But actually several of the 2D settings are nothing but tweaks of the 3D ones, and in this way Unity supports mixing the two approaches.

The notion of (possibly multiple) cameras and lights makes perfect sense also for 2D worlds, and is a powerful and (indeed) enlightening conceptual tool.

Documentation quality: multilanguage, community…

In the emergence of “winners” in new technology fields I have repeatedly seen these features exhibited by the winners: (relative) ease of first access, good documentation and architectural flexibility. Not only does Unity have extensive, high-quality reference documentation (user manual and references) and tutorials, but there is also an extensive set of books and tutorials provided by third parties.

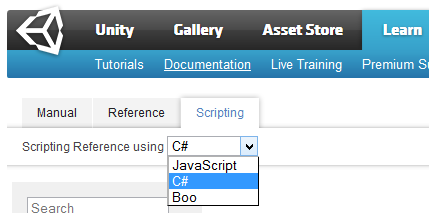

Documentation is provided with code examples for the three languages supported by Unity, JavaScript, C# and the mysterious Boo.

Unity’s support for C# means getting the static check safety of Java while having superseded many of Java’s impractical aspects. It also means not having to deal with memory management, at least at prototyping level. It is yet another positive aspect of this tool.

But today, documenting a rapidly evolving tool and its environment means having ways to hold an interactive conversation about the tool. And Unity provides that too, through community-managed Q&A and forums.

Components and IDE extensions: the Asset store

Another great Unity feature is that it is in large part not being built by the Unity guys :D.

This is because there is a huge and ever expanding Asset Store, where you can download (sometimes for free, sometimes by paying) not only game assets, but also components, that is, functional extensions and ready-made solutions that integrate into Unity.

And integrate means not simply having additional scripts available, but actually extending the IDE, its menu and components with additional entries and panels, corresponding to new and / or extended functions that cover specific needs.

Example: ropes and cranes. Recently I was discussing how to model Leonardo da Vinci’s ideas about cranes in Unity, and then in the Asset Store I searched for components that deal with ropes and their dynamics: of course I found several.

Another example: want to have a complex dialogue system? Maybe your dream is to finally make branching narrative fun? There is a load of components ready for that. In this nice post Owlchemy Labs present how they used a few to quickly implement complex narratives.

Want AI (Artificial Intelligence) for your NPCs (Non Player Characters)? One component among hundreds is Behave 2, which also provides a visual editor (yes, inside Unity) and state of the art AI support. I could go on and on.

The icing on the cake is the ease with which you can extend the environment itself, so that it can be used for your own project maintenance and debugging (here you see examples from the Goscurry project, kindly provided by Daniele Giardini).

Not doing too much, not doing too little

Given the complexity of the game matter, any general tool faces a problem: on one side, for whatever you want to do there will be a more specialized, harder to use and more powerful tool; on the other, your tool is useful precisely because it covers all aspects of game making. Keeping a balance between the two sides cannot be easy, and in Unity this is mostly done by allowing progressive enhancements.

For example, you can’t do any real 3D modeling with Unity, yet it lets you create cubes and spheres, so that you soon get an idea of how the scene should work. You can quickly do drafts and refine progressively; not too much, not too little.

While Unity structurally embraces separation of concerns by importing assets in a wide range of formats, you can still build working multi-platform game prototypes without leaving it.

A large universe that is expanding

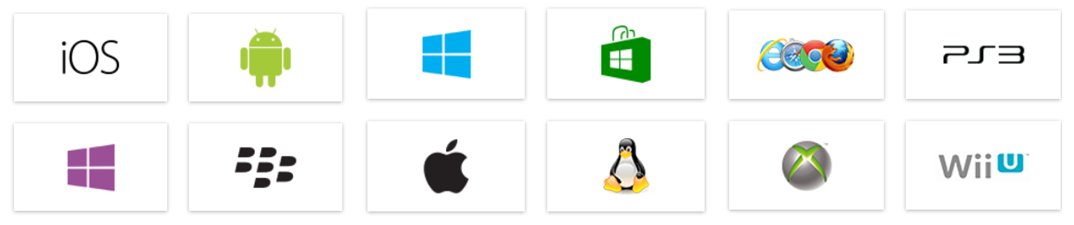

One aspect is that Unity is a game engine and editor that publishes almost anywhere. What does “almost anywhere” mean? One can divide the game world into four continents: consoles, smartphones, desktop (installed), browser. Unity runs in most “countries” of all these continents – as shown in the picture above.

Given the multiplication and progressive diversification of consoles / mobiles / microconsoles, Unity seems to be more and more the right choice. Say now it’s time for “microconsoles” to emerge:

“Microconsoles are little game consoles promising to deliver a new kind of gaming relationship between you and your TV” – Tadhg Kelly

Well, in the case of Ouya you already have full, documented support for Unity development. The same goes for Microsoft:

Unity and Microsoft will now be working together to bring the Xbox One deployment add-on to all developers registered with the ID@Xbox program at no cost to the developers (here).

Unity provides an abstraction layer over hardware, and in this sense it is also a “life insurance” for game projects: consider that games can take a loooong time to get done, and by building in Unity you are somewhat safe with respect to changes in hardware and console hype.

Possible negatives

Negatives are symmetric to the positives: ease of adoption and wide coverage could generate the belief that making any kind of game is easy and cheap, leading to problems in the medium run. For example, setting public variables to instances through the IDE is useful in very simple projects, but can lead to messy code over the project lifetime.

But the main problem to me seems the lack of affordable competing products, which is not reassuring.

2015 UPDATE: In 2014, “Epic Games drastically cut the cost of licenses to its Unreal Engine”: this means that Unity has an affordable, good quality competitor, in particular for 3D games. This is good news for all.

ADDENDA for version 4.6

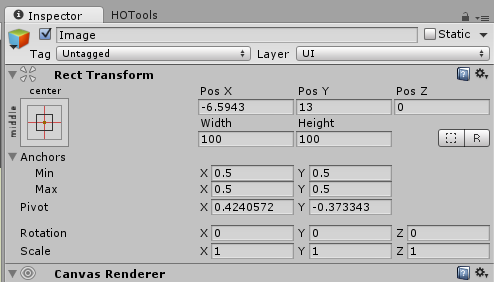

In summer 2014 Unity 4.6 came out with new support for creating user interface elements in, above or separately from the gameplay environment: Unity’s new UI. This support was previously lacking, and to get decent results one had to resort to plugins. Unity users had to wait so long that there was widespread skepticism about this UI coming out and being well made, but actually it is now one of the best parts of the solution.

Conclusion

I think it should be clear that Unity’s popularity is not due to hype or marketing, but that there are simple, pragmatic reasons.

Unity became possible as available memory and resources increased so much that memory tweaking lost its primary place in the game development workflow (but see here). Unity facilitates agile game creation, allowing continuous releases and quick prototyping, once you get the gist of how it works.

Links and people

The following posts were useful in writing this one:

- https://forums.tigsource.com/index.php?topic=28113.0

- Creating on the Cheap with Unity

- https://slashdot.org/topic/cloud/how-unity3d-become-a-game-development-beast/

- https://forum.unity3d.com/threads/187960-Why-is-Unity-so-incredibly-difficult-to-work-with

- https://gamedevlife.com/analysis/why-choose-unity-3d-part-2/

- https://www.codeproject.com/Articles/682834/So-you-want-to-be-a-Unity3D-game-developer

I wish to thank the life gurus Daniele Giardini and Stefano Cecere for feedback on the first draft. Responsibility for any mistake remains entirely yours, dear reader, so point them out and I’ll fix this post 😉 The cover image is from Year Walk. Other images are either from Unity’s site or from the IDE.

On my Twitter stream I talk about games, game dev, gameplay and game design, mostly applied to ideas that come from history and science (hope it’s not as boring as that may sound).

I wonder if you ever tried GameMaker and if you found it useful for first iterations and protos. I’m an amateur board game designer and I find GameMaker useful for early playtesting the math around the game mechanics. A comparison article between the Unity platform and GameMaker would be enlightening.

Actually I installed it last week – there was a limited time special offer. Really cool idea, will work on it. Thanks!

Very interesting! I work with Alternativa3D, but this engine looks very cool for creating great games!

How do you think Unity compares with ShiVa 3D? I’ve read some buzz on 3D dev forums comparing the two development environments and their workflows but nothing really in depth. I would love to see a full comparison between the two because I’m actually at a crossroads with them. It’s tough too because I’m waiting for ShiVa 3D v2.0 to come out later this year in 2014 to really see where both environments stand. I’m primarily developing for iOS and Android.

That was a GREAT article. Just the primer I needed. Thanks for posting.

Lynda.com has added Unity to its list of courses. I am going to dive in and give it a go.

Cheers.

Now Unity is available also for Playstation 4 development: see here http://blogs.unity3d.com/2014/06/16/unity-for-playstation4-is-here/